In Trax we want numpy operations to run very fast, making use of GPUs and TPUs to accelerate them. We also want to automatically compute gradients of functions on tensors. This is done in the trax.fastmath package thanks to its backends - JAX and TensorFlow numpy.

The source of the Embedding layer, as far as it survives in this copy (`...` marks text lost in extraction):

    ...
      """Trainable layer that maps discrete tokens/IDs to vectors."""

      def __init__(self,
                   ...
        """Returns an embedding layer with given vocabulary size and vector size.

        Args:
          vocab_size: Size of the input vocabulary. The layer will assign a
              unique vector to each ID in `range(vocab_size)`.
          d_feature: Dimensionality/depth of the output vectors.
          kernel_initializer: Function that creates (random) initial vectors
              for the embedding.
        """
        ...
        self._d_feature = d_feature  # feature dimensionality
        ...
        self._kernel_initializer = kernel_initializer

      def forward(self, x):
        """Returns embedding vectors corresponding to input token IDs.
        ...
        ... self.weights, x, axis=0, mode='clip')

      def init_weights_and_state(self, input_signature):
        """Returns tensor of newly initialized embedding vectors."""
        del input_signature
        shape_w = (self. ...

Layers with trainable weights like Embedding need to be initialized with the signature (shape and dtype) of the input, and then can be run by calling them.

    Step      1: train WeightedCategoryCrossEntropy |  1.33800304
    Step      1: eval  WeightedCategoryCrossEntropy |  0.71843582
    Step      1: eval      WeightedCategoryAccuracy |  0.56562500

    Step    500: Ran 499 train steps in 5.77 secs
    Step    500: train WeightedCategoryCrossEntropy |  0.62914723
    Step    500: eval  WeightedCategoryCrossEntropy |  0.49253047
    Step    500: eval      WeightedCategoryAccuracy |  0.74062500

    Step   1000: Ran 500 train steps in 5.03 secs
    Step   1000: train WeightedCategoryCrossEntropy |  0.42949259
    Step   1000: eval  WeightedCategoryCrossEntropy |  0.35451687
    Step   1000: eval      WeightedCategoryAccuracy |  0.83750000

    Step   1500: Ran 500 train steps in 4.80 secs
    Step   1500: train WeightedCategoryCrossEntropy |  0.41843575
    Step   1500: eval  WeightedCategoryCrossEntropy |  0.35207348
    Step   1500: eval      WeightedCategoryAccuracy |  0.82109375

    Step   2000: Ran 500 train steps in 5.35 secs
    Step   2000: train WeightedCategoryCrossEntropy |  0.38129005
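The initialize-then-call pattern described above for layers such as Embedding can be sketched in plain NumPy. This is only an illustration under stated assumptions, not Trax's implementation: the class name `TinyEmbedding`, its `init` method and the `seed` argument are hypothetical, and NumPy stands in for the Trax backend. It shows weights being created in a separate initialization step and a lookup that clips out-of-range token IDs, as the `mode='clip'` argument in the fragment above suggests.

```python
import numpy as np


class TinyEmbedding:
    """Hypothetical NumPy stand-in for an embedding layer (not Trax's class)."""

    def __init__(self, vocab_size, d_feature):
        self._vocab_size = vocab_size
        self._d_feature = d_feature
        self.weights = None  # created later, in init()

    def init(self, input_signature, seed=0):
        """Creates the embedding table; the input signature is not needed."""
        del input_signature  # the table shape depends only on vocab and depth
        rng = np.random.default_rng(seed)
        self.weights = rng.normal(size=(self._vocab_size, self._d_feature))

    def __call__(self, x):
        """Returns one vector per token ID, clipping out-of-range IDs."""
        return np.take(self.weights, x, axis=0, mode='clip')


layer = TinyEmbedding(vocab_size=8, d_feature=3)
token_ids = np.array([[1, 5, 7], [0, 2, 99]])  # 99 is deliberately out of range
layer.init(input_signature=(token_ids.shape, token_ids.dtype))
vectors = layer(token_ids)
print(vectors.shape)  # (2, 3, 3): one 3-dimensional vector per token ID
```

With `mode='clip'`, the out-of-range ID 99 is mapped to the last row of the table instead of raising an error, so `vectors[1, 2]` equals `layer.weights[7]`.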

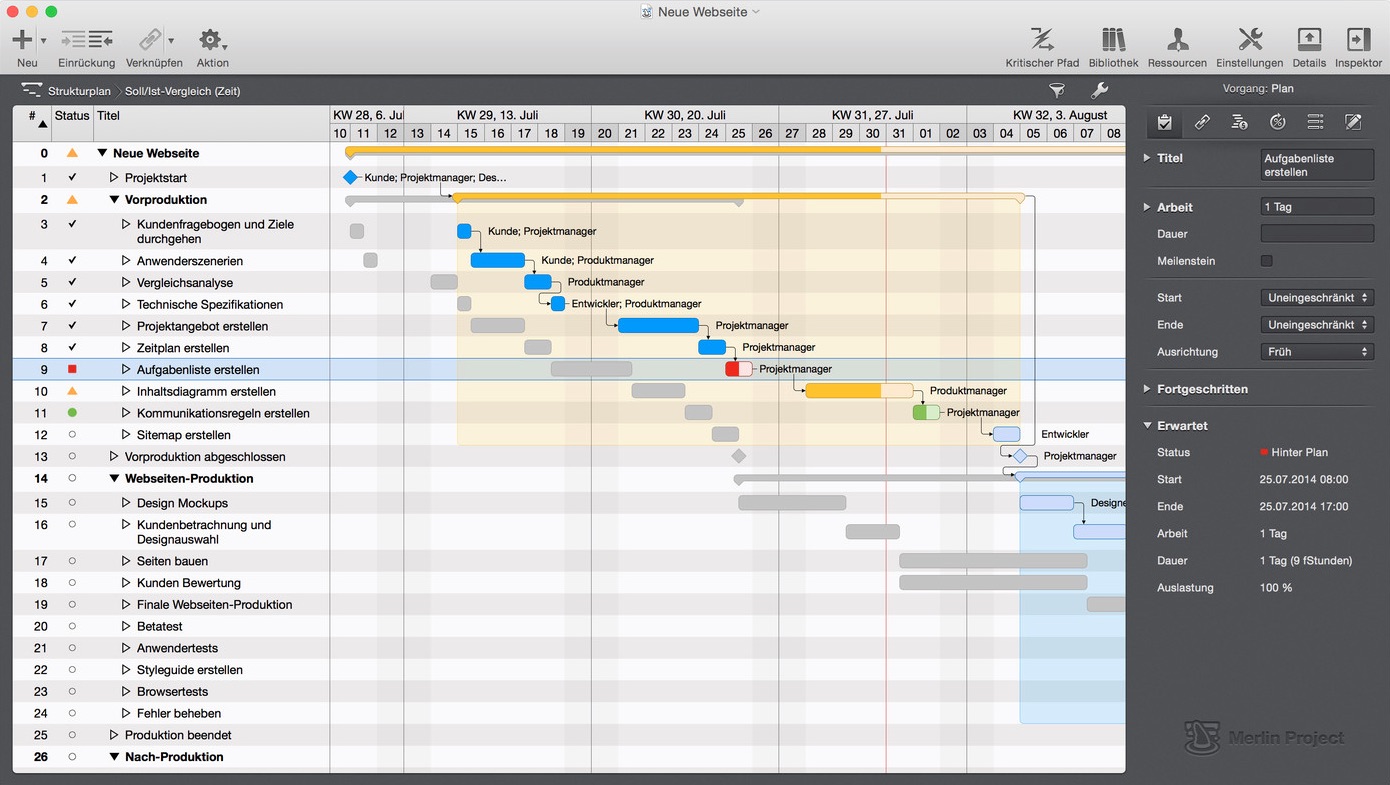


Run a pre-trained Transformer (`...` marks text lost in extraction):

    # Pre-trained model config in gs://trax-ml/models/translation/ende_wmt32k.gin
    model = trax. ...
        ...
        n_heads=8, n_encoder_layers=6, n_decoder_layers=6,
        ...

    ... init_from_file('gs://trax-ml/models/translation/ende_',
        ...

    sentence = 'It is nice to learn new things today!'
    tokenized = list(trax. ...

    tokenized = tokenized[None, :]  # Add batch dimension.
    ...
        model, tokenized, temperature=0.0)  # Higher temperature: more diverse results.

    # De-tokenize,
    tokenized_translation = tokenized_translation[0][:-1]  # Remove batch and EOS.
    ... detokenize(tokenized_translation,
        ...

Output:

    Es ist schön, heute neue Dinge zu lernen!

Trax includes basic models (like ResNet, LSTM, Transformer) and RL algorithms. It is also actively used for research and includes new models like the Reformer and new RL algorithms like AWR. Trax has bindings to a large number of deep learning datasets.

You can use Trax either as a library from your own Python scripts and notebooks or as a binary from the shell, which can be more convenient for training large models. It runs without any changes on CPUs, GPUs and TPUs.

You can learn here how Trax works, how to create new models and how to train them on your own data.

The basic units flowing through Trax models are tensors: multi-dimensional arrays, sometimes also known as numpy arrays after numpy, the most widely used package for tensor operations. You should take a look at the numpy guide if you don't know how to operate on tensors: Trax also uses the numpy API for that.
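As a small illustration of the tensor operations mentioned above, the following sketch uses plain NumPy, whose API the text says Trax follows. The token values are made up for the example, and treating the last entry as an end-of-sequence marker is an assumption. It shows the two indexing idioms that the translation snippet's comments refer to (adding a batch dimension, then removing the batch dimension and the final token), plus a reduction along an axis.

```python
import numpy as np

# Hypothetical token IDs for one sentence; the last entry plays the role of EOS.
tokens = np.array([12, 7, 42, 1])
print(tokens.shape)          # (4,)

# Add a leading batch dimension: shape (4,) becomes (1, 4).
batched = tokens[None, :]
print(batched.shape)         # (1, 4)

# Remove the batch dimension again and drop the final (EOS) token.
unbatched = batched[0][:-1]
print(unbatched.tolist())    # [12, 7, 42]

# Reductions take an axis argument: sum each row of a 2 x 3 matrix.
matrix = np.arange(6, dtype=np.float32).reshape(2, 3)
row_sums = matrix.sum(axis=1)
print(row_sums.tolist())     # [3.0, 12.0]
```

The same indexing and reduction calls are what a reader would look up in the numpy guide that the text recommends.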


Trax is an end-to-end library for deep learning that focuses on clear code and speed. It is actively used and maintained in the Google Brain team. This notebook (run it in colab) shows how to use Trax and where you can find more information.

- Run a pre-trained Transformer: create a translator in a few lines of code.
- Features and resources: API docs, where to talk to us, how to open an issue and more.
- Walkthrough: how Trax works, how to make new models and train on your own data.

We welcome contributions to Trax! We welcome PRs with code for new models and layers as well as improvements to our code and documentation. We especially love notebooks that explain how models work and show how to use them to solve problems!

- trax.data API explained: Explains some of the major functions in the trax.data API.
- Named Entity Recognition using Reformer: Uses a Kaggle dataset for implementing Named Entity Recognition using the Reformer architecture.
- Deep N-Gram models: Implementation of deep n-gram models trained on Shakespeare's works.

Execute the following cell (once) before running any of the code samples.

Trax - Deep Learning with Clear Code and Speed